Generative Engine Optimization (GEO): Ranking in ChatGPT, Perplexity and Gemini


Generative engine optimization (GEO) is the fastest-shifting priority in B2B search strategy for 2026. As ChatGPT, Perplexity, Google Gemini, and Claude handle an increasing share of research queries from B2B buyers, the question is no longer only “do we rank on page one?” but “does our content get cited when AI systems synthesize an answer?”

These are two distinct questions with partially overlapping but not identical answers. A page that ranks position three on Google is not automatically cited by ChatGPT. A domain that Perplexity trusts heavily may not be the same one Google features in AI Overviews. The platforms use different retrieval mechanisms, trust signals, and freshness requirements. Treating GEO as a single channel leads to missed coverage across multiple high-intent touchpoints.

This guide covers what generative engine optimization actually is, how each major AI platform selects its sources, what the research shows about citation factors, and how B2B content teams can build a systematic GEO strategy on top of existing SEO foundations. If you are already building content clusters and optimizing page structure, a strong B2B content SEO strategy gives you the base required before GEO-specific tactics will compound effectively.

What Is Generative Engine Optimization (GEO)

Generative engine optimization is the practice of structuring, positioning, and distributing content so that AI language models select it as a cited source when generating answers. The term was introduced and formally defined in a 2023 research paper by Aggarwal et al. at Princeton University, which examined how generative engines retrieve and rank content compared to traditional search engines, and which content modifications produce measurable increases in visibility within AI-generated answers.

The Technical Mechanism: How LLMs Retrieve and Select Sources

Large language models that support real-time web retrieval use a process called Retrieval-Augmented Generation (RAG). In this architecture, the AI system does not rely solely on its training data to answer a query. Instead, it retrieves relevant passages from a pre-indexed database, scores them for semantic relevance using vector embeddings, and synthesizes a response from the highest-scoring passages.

The critical implication for content teams: passages are scored independently. Retrieval pipelines typically split a page into chunks, often at roughly paragraph granularity, encode each chunk as its own vector, and evaluate it for semantic similarity to the query. A long, well-structured page does not guarantee that every paragraph is retrieved. A self-contained, precise paragraph that directly answers a specific question is more likely to be retrieved and cited than a key insight buried in paragraph 12 of a 4,000-word post.

This passage-level retrieval is fundamentally different from how Google evaluates pages. Google ranks the page as a unit. AI retrieval systems retrieve the passage as a unit. This distinction drives most of the practical differences between GEO and SEO tactics.
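
To make passage-level scoring concrete, here is a minimal sketch of the idea. It is not how any particular AI platform works: the `embed` function is a toy bag-of-words stand-in for the learned dense embeddings real systems use, and all function names are hypothetical. The point it illustrates is only that each paragraph is scored against the query on its own, so a framing paragraph scores near zero even when it sits on a relevant page.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding: a bag-of-words term-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_passages(query: str, page: str) -> list[tuple[float, str]]:
    """Split a page on blank lines and score each paragraph independently."""
    passages = [p.strip() for p in page.split("\n\n") if p.strip()]
    q = embed(query)
    scored = [(cosine(q, embed(p)), p) for p in passages]
    return sorted(scored, key=lambda item: item[0], reverse=True)


# Hypothetical three-paragraph page: framing, a direct answer, and filler.
page = (
    "In today's complex digital landscape, marketing teams face many challenges.\n\n"
    "Generative engine optimization is the practice of structuring content so "
    "AI systems cite it as a source.\n\n"
    "Our team has won several awards."
)

ranked = rank_passages("what is generative engine optimization", page)
for score, passage in ranked:
    print(f"{score:.2f}  {passage[:60]}")
```

In this toy run the direct-answer paragraph ranks first and the framing and filler paragraphs share no terms with the query, which mirrors the argument for answer-first, self-contained passages.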

GEO vs. Traditional SEO: Key Differences

| Dimension | Traditional SEO | Generative Engine Optimization (GEO) |
|---|---|---|
| Target system | Google, Bing ranking algorithms | ChatGPT, Perplexity, Gemini, Claude retrieval systems |
| Evaluation unit | Entire page | Individual passage or paragraph |
| Primary output | Ranked link in SERP | Citation in synthesized AI answer |
| Traffic model | Click-through to website | Brand attribution; partial referral; zero-click impression |
| Top authority signal | Backlink quality and quantity | Brand mention frequency and domain trust |
| Content format advantage | Comprehensive long-form content | Self-contained, answer-first passages with data attribution |
| Freshness requirement | Moderate; evergreen content sustains | High for ChatGPT and Perplexity; lower for Claude |
| Keyword optimization | Critical for ranking | Neutral to negative; keyword-dense titles perform worse |

GEO vs. Google AI Overview Optimization

Google AI Overviews are a specific application of generative engine optimization, but they are not the same as GEO broadly. AI Overviews use Google’s core ranking infrastructure, meaning the same signals that produce strong organic rankings in Google Search also improve AI Overview citation eligibility. This is covered in detail in our guide to Google AI Overview optimization.

ChatGPT and Perplexity, by contrast, use different retrieval architectures and trust signals. Research on citation overlap found that only 12% of URLs cited by ChatGPT for a given query appear in Google’s top 10 results for the same query. Perplexity shows slightly higher overlap at around 33%. This means your Google rankings have limited predictive value for ChatGPT or Perplexity citation. A complete GEO strategy must be platform-aware rather than assuming that Google visibility transfers to AI search visibility.

Why GEO Matters for B2B Brands in 2026

The case for investing in GEO comes down to three data points that B2B marketing teams need to understand clearly before they can make informed channel decisions.

The Scale of AI Search in Early 2026

AI search platforms have grown from niche tools to mainstream research channels with significant reach among professional audiences. ChatGPT processes approximately 5.4 billion monthly visits as of early 2026. Perplexity handles over 100 million search queries per week. Google Gemini’s market share among AI platforms has grown from 5.7% to 21.5% over the course of 2025. Among B2B SaaS companies specifically, AI platforms now account for around 2.8% of all website visits, the highest AI referral rate of any sector.

Gartner research from 2026 found that 35% of Gen Z professionals and 19% of millennials now use AI tools as their first stop for research questions, ahead of traditional search engines. For B2B brands selling to early-career decision-makers and influencers, this demographic shift makes AI search a primary discovery channel, not a secondary one.

Why AI-Referred Traffic Converts at a Premium

Volume matters less than conversion quality for B2B lead generation, and AI-referred traffic punches well above its weight on quality metrics. ChatGPT-referred visitors spend an average of 15 minutes on-site compared to 8 minutes for Google organic visitors, view 12 pages per session versus 9, and convert to signups at roughly 14 to 16%. Claude-referred visitors convert at approximately 16.8%, the highest of any AI platform studied. Perplexity referrals show sign-up conversion rates around 10.5%.

The mechanism is straightforward: buyers who arrive via an AI-generated answer have already received a pre-qualified summary of the topic. They arrive on your site already oriented to the subject matter, with a specific intent to verify or go deeper rather than to evaluate whether you are relevant. This is a different and higher-quality intent state than keyword-matched organic search traffic.

The Dark AI Traffic Problem B2B Teams Are Missing

There is a significant measurement gap in how B2B teams assess their AI search performance. Research by Loamly analyzing nearly 450,000 sessions found that approximately 70.6% of AI-referred traffic arrives without a referrer header, making it invisible to standard Google Analytics 4 attribution. This traffic appears as direct or unknown in reporting, meaning most brands are substantially underreporting their AI channel performance.

The practical implication: if you check your GA4 data and see a small AI traffic number, your actual AI-referred visits are likely three to four times higher. B2B teams making budget decisions based on visible AI attribution data are working with an incomplete picture. Building brand mention tracking and manual AI query monitoring into your measurement protocol is essential before you can accurately evaluate GEO ROI.
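
The "three to four times" figure follows directly from the referrer-less share. As a rough sketch, assuming the 70.6% share quoted above also holds for your site (it may not), visible AI sessions can be scaled up as follows. The function name and the sample session count are hypothetical.

```python
def estimate_total_ai_sessions(visible_sessions: float, dark_share: float = 0.706) -> float:
    """Scale visible AI-attributed sessions up to an estimated total.

    dark_share is the fraction of AI-referred sessions that arrive without a
    referrer. The 0.706 default is the Loamly figure quoted in the article and
    is an assumption for any individual site.
    """
    if not 0 <= dark_share < 1:
        raise ValueError("dark_share must be in the range [0, 1)")
    # Visible sessions are the (1 - dark_share) slice of the true total.
    return visible_sessions / (1 - dark_share)


visible = 500  # hypothetical AI-attributed sessions reported by GA4 in a month
total = estimate_total_ai_sessions(visible)
print(f"multiplier: {total / visible:.1f}x, estimated total: {total:.0f}")
```

With a 70.6% dark share, only 29.4% of AI sessions are visible, so the multiplier is 1 / 0.294, or about 3.4. Treat the output as an order-of-magnitude estimate rather than a measured value.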

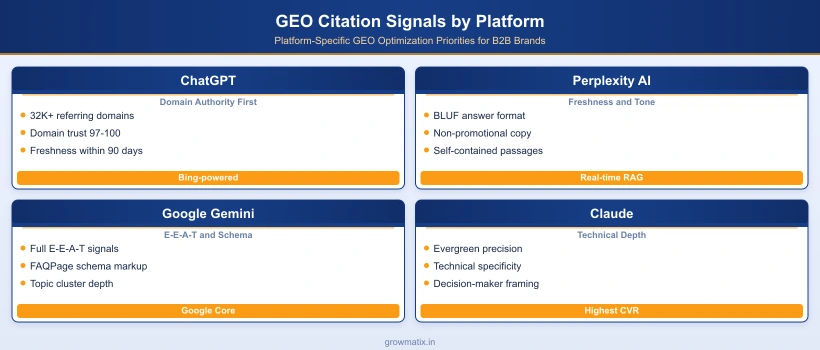

How ChatGPT Selects and Cites Sources

ChatGPT’s web-browsing mode uses Bing as its search provider, not Google. This matters for strategy because the underlying index, and the authority signals that inform citation selection, differ from Google’s. An SE Ranking analysis of 129,000 domains across 216,524 pages, published in December 2025, identified the strongest predictors of ChatGPT citation with substantial specificity.

Domain Authority: The Primary Citation Gate

Domain-level authority is the dominant citation predictor for ChatGPT, operating with notable threshold effects rather than linear correlations. Sites with 32,000 or more referring domains are 3.5 times more likely to be cited than sites below that threshold. Domain trust scores of 97 to 100 produce an average of 8.4 citations per domain analyzed, while domains with trust scores below 43 average only 1.6 citations. Organic traffic volume shows a similar threshold: sites with 190,000 or more monthly organic visitors see a step-change increase in citation frequency.

These thresholds suggest that for most early-stage B2B brands, direct ChatGPT citation optimization is premature. The foundational work of building domain authority through link acquisition, content depth, and brand recognition precedes and enables GEO at the ChatGPT level. Brands that already meet these thresholds can layer GEO-specific content tactics on top of existing authority.

Content Signals That Increase ChatGPT Citation Rates

Within the set of pages that meet the domain authority threshold, specific content characteristics further differentiate citation frequency:

- Expert quotes and attributed statements: Pages including direct expert quotes average 4.1 citations versus 2.4 for equivalent pages without attributed expertise.

- Statistical data points: Pages with 19 or more specific data points average 5.4 citations. Pages with minimal data points average 2.8.

- Section length: Sections of 120 to 180 words between headings average 4.6 citations. Sections under 50 words average 2.7. Excessively long sections also underperform.

- Content freshness: Pages updated within the past three months average 6.0 citations. Outdated pages average 3.6. Regular content refreshes, even minor ones, maintain citation eligibility.

- Page speed: First Contentful Paint under 0.4 seconds correlates with 6.7 average citations. Pages with FCP above 1.13 seconds average 2.1 citations.
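
The section-length signal is easy to check mechanically. The sketch below assumes you have already split a page into heading and body pairs (how you do that depends on your CMS) and simply labels each section against the 120 to 180 word range reported above. The function name and sample sections are hypothetical, and the range is a correlation from one study, not a guaranteed citation rule.

```python
def section_length_report(
    sections: dict[str, str], low: int = 120, high: int = 180
) -> dict[str, str]:
    """Label each section 'short', 'in range', or 'long' by word count."""
    report = {}
    for heading, body in sections.items():
        word_count = len(body.split())  # whitespace-delimited word count
        if word_count < low:
            label = "short"
        elif word_count > high:
            label = "long"
        else:
            label = "in range"
        report[heading] = f"{word_count} words ({label})"
    return report


# Hypothetical sections with placeholder bodies of known length.
sections = {
    "Domain Authority": "word " * 150,
    "Page Speed": "word " * 40,
}
report = section_length_report(sections)
for heading, summary in report.items():
    print(f"{heading}: {summary}")
```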

Two counter-intuitive findings from the same research: FAQ schema markup correlated with slightly fewer citations (3.6 average) than pages without FAQ schema (4.2 average). Question-style H2 and H3 headings similarly underperformed compared to direct topic statements (3.4 citations vs. 4.3). These findings suggest that over-structuring content for a formulaic “answer-box” format does not help ChatGPT citation and may slightly hinder it.

Social Platforms and Review Sites as Citation Amplifiers

ChatGPT draws from a broader web than just your domain. Active presence on platforms that contribute to your brand’s information footprint amplifies citation frequency:

- Reddit presence: brands with 10 million or more mentions average 7.0 citations; minimal Reddit presence averages 1.8

- Quora presence: brands with 6.6 million or more mentions average 7.0 citations; minimal Quora presence averages 1.7

- Review platform listings: presence across Trustpilot, G2, Capterra, and similar platforms contributes to overall entity recognition

For B2B brands, this means strategic participation in community platforms, not just content publishing on owned domains. Contributing to relevant Reddit communities, publishing on LinkedIn, answering questions on Quora in your expertise area, and maintaining complete profiles on B2B review platforms all contribute to the information landscape that ChatGPT draws from when synthesizing responses about your category.

How Perplexity AI Ranks and Cites Content

Perplexity processes over 100 million search queries weekly and has grown from 9 million daily active users in late 2024 to 35 million by early 2025. Its user base skews heavily toward professionals: roughly 30% of Perplexity’s active users are in senior leadership roles, making it a disproportionately high-value platform for B2B brand visibility.

Perplexity Citation Architecture and Trust Signals

Perplexity’s citation model differs from ChatGPT’s in several important ways. Content freshness carries more weight in Perplexity’s retrieval system: for competitive informational queries, content updated within two to three days maintains optimal citation eligibility. Non-promotional tone is a strong positive signal, with Semrush’s analysis of AI citation patterns documenting a negative 26% correlation between promotional copy and AI citation probability. Perplexity’s retrieval system is particularly sensitive to content that reads like advertising rather than information.

Entity clarity is also more explicitly important in Perplexity than in other platforms. Content that clearly establishes what the publishing organization is, what it specializes in, and who authored the piece receives higher retrieval scores. For B2B brands, this means ensuring that every published page establishes organizational context clearly, not just within an About page but within individual article pages.

Tactical Optimization for Perplexity AI Visibility

The practical optimization checklist for Perplexity citation breaks into four areas:

- BLUF formatting (Bottom Line Up Front): Lead every section and every article with the direct answer before supporting detail. Perplexity’s retrieval system favors content where the key claim appears in the first two sentences of a section, not buried after contextual framing.

- Self-contained passages: Each paragraph should answer a specific question independently, without requiring the reader to understand paragraphs before or after it. Retrieval systems extract passages individually; passages that only make sense in context are less retrievable.

- Original data and proprietary research: Perplexity heavily weights content that introduces new information rather than synthesizing what is already published elsewhere. First-party research, named survey results, and original analysis produce stronger citation eligibility than explanatory summaries of third-party data.

- Third-party validation signals: Mentions of your brand and content in independent publications, podcasts, and industry media contribute to Perplexity’s entity trust scoring. Digital PR activities that produce mentions in authoritative contexts directly support Perplexity citation eligibility.

How Google Gemini and AI Overviews Select Content

Google Gemini and AI Overviews share underlying retrieval infrastructure with Google’s core search systems. According to Google Search Central’s documentation on AI features, AI Overviews use the same core ranking systems as standard Google Search, meaning E-E-A-T signals, topical authority, and structured content quality all apply directly. AI Overviews appeared for approximately 15.7% of Google searches in November 2025, with significant growth in commercial and transactional query coverage through the year.

Gemini Citation Factors for B2B Content

For B2B brands, the most actionable Gemini and AI Overview citation factors are:

- E-E-A-T depth: Named authors with verifiable credentials, inline citations from authoritative sources, and first-person practitioner observations all strengthen E-E-A-T signals that Google’s systems evaluate when selecting AI Overview sources.

- Semantic completeness: Pages covering a topic comprehensively, with H2 and H3 subheadings that address related questions a buyer would have, perform better than narrow single-question pages. AI Overviews select from pages that give Google a complete picture of the topic.

- Schema markup: FAQPage and Article schema directly assist Google’s AI extraction systems. Unlike ChatGPT, where FAQ schema correlated with slightly fewer citations, Google’s AI Overview selection process gives explicit weight to structured data that maps content relationships.

- Topic cluster architecture: Multiple pages from the same domain can be cited within a single AI Overview if the domain’s topic cluster covers the subject comprehensively. This makes cluster-based content architecture directly valuable for AI Overview citation, not just for organic rankings.
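
For teams that have not shipped FAQPage markup before, the following sketch generates the JSON-LD from question-and-answer pairs. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity` and `acceptedAnswer` properties come from the schema.org vocabulary; the helper name and the sample pair are illustrative assumptions, and whether the markup influences any given AI Overview is not something this code can verify.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD document from (question, answer) pairs."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(doc, indent=2)


markup = faq_jsonld(
    [
        (
            "Does GEO replace traditional SEO for B2B brands?",
            "GEO does not replace traditional SEO; it extends it.",
        )
    ]
)

# Embed the result in the page inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The answer text in the markup should match the answer visible on the page, so generating both from the same source data is a sensible design choice.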

The Shift to Commercial and Transactional AI Overviews

The query type distribution for Google AI Overviews shifted significantly through 2025. Informational queries fell from 91.3% of all AI Overview appearances in January 2025 to 57.1% in October 2025. Commercial intent queries grew from 8.2% to 18.6%. Transactional queries grew from 2.0% to 13.9%. For B2B brands that had deprioritized AI Overview optimization on the grounds that their commercial pages would not appear in AI summaries, this data represents a material change in the optimization opportunity.

How Claude Handles Source Attribution

Anthropic’s Claude represents a smaller but strategically significant AI citation channel for B2B brands. Claude’s traffic share among AI platforms is approximately 2%, but its referral quality metrics are the highest of any platform studied. Claude-referred visitors convert at an average of 16.8% on B2B sites, reflecting an audience that predominantly consists of technical professionals and sophisticated decision-makers who use Claude specifically for in-depth research tasks.

Claude Traffic Characteristics and Audience Profile

Claude’s citation behavior differs from ChatGPT and Perplexity in a meaningful way: it is substantially less dependent on content freshness. Well-researched, technically precise content that is 12 to 18 months old maintains strong Claude citation eligibility, whereas the same content would be deprioritized by ChatGPT’s freshness threshold. This makes Claude a platform where investment in evergreen authoritative content, rather than continuous publishing cadence, is the appropriate strategy.

Claude’s referral yield per page crawled is notably lower than that of other platforms (approximately one referral per 500,000 pages crawled), meaning that most content Claude crawls never generates a referral. The implication is not that Claude is a low-value channel, but that the content that does get cited by Claude tends to be genuinely authoritative within its subject area. Building for Claude citation means building for depth and accuracy, not for volume.

Optimizing B2B Content for Claude Citation

The highest-leverage optimizations for Claude citation eligibility are:

- Technical precision: Claude evaluates factual accuracy and terminological precision more explicitly than general-purpose information coverage. Content with precise, correct technical claims outperforms content that is accurate at a high level but vague on specifics.

- Decision-maker context: Claude’s user base skews toward people making complex technical or strategic decisions. Content framed around decision criteria, trade-offs, and implementation specifics, rather than introductory explanations, aligns with the research tasks Claude users bring to the platform.

- First-hand experience signals: Named practitioners, specific case observations, and implementation-level detail all signal Experience (the first E in E-E-A-T) that Claude’s retrieval systems reward in source selection.

- Crawl access: Ensure that ClaudeBot is not blocked in your robots.txt. Many B2B sites running aggressive bot-blocking rules inadvertently prevent Claude’s crawler from indexing their content entirely.
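
A quick way to test the crawl-access point is to run your robots.txt rules through Python's standard-library `urllib.robotparser`. The sketch below uses two hypothetical rule sets and a placeholder URL: one that blocks every bot except Googlebot, and a variant that adds an explicit group for the `ClaudeBot` user agent named above. The standard-library parser is an approximation of how a real crawler interprets the file, so treat the result as a sanity check.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical aggressive rule set: everything blocked except Googlebot.
blocking = """\
User-agent: *
Disallow: /

User-agent: Googlebot
Allow: /
"""

# Same rules plus an explicit allow group for ClaudeBot.
fixed = blocking + """
User-agent: ClaudeBot
Allow: /
"""

url = "https://example.com/blog/geo-guide"  # placeholder URL
print("blocking rules:", is_allowed(blocking, "ClaudeBot", url))
print("fixed rules:   ", is_allowed(fixed, "ClaudeBot", url))
```

The same helper can be pointed at other AI crawler user agents, provided you confirm each token in the vendor's own documentation before relying on it.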

GEO Citation Factors: What the Research Actually Shows

The academic and industry research on GEO citation factors has produced a cleaner picture of what works than the early speculation suggested. The Princeton GEO paper, subsequent industry analyses by Ahrefs, Semrush, and BrightEdge, and SE Ranking’s large-scale domain study from December 2025 all converge on a consistent set of findings.

Tier 1 Signals: Strongest Cross-Platform Citation Predictors

The following factors show consistent positive correlation with AI citation across multiple platforms and multiple research methodologies:

- Domain authority and referring domain count: The single strongest predictor of ChatGPT citation; also significant for Perplexity and Gemini. Building referring domain authority through editorial link acquisition remains foundational for GEO, not just SEO.

- Brand mention frequency across independent sources: Ahrefs analysis of 76 million AI Overviews found brand mentions correlate at 0.664 with AI visibility, compared to 0.218 for backlinks alone. That is roughly three times the correlation strength (0.664 / 0.218 ≈ 3.0), making digital PR and earned media coverage among the highest-ROI GEO investments.

- Non-promotional tone: Across ChatGPT, Perplexity, and Gemini, content written in an informational register outperforms promotional copy. AI retrieval systems identify and deprioritize sales-oriented language. Pages optimized primarily for conversion, with strong commercial framing, underperform on AI citation relative to their organic rankings.

- Expert attribution and data specificity: Content that names sources, cites specific figures with attribution, and includes direct practitioner observations consistently outperforms generic summaries. AI systems distinguish between content that adds information to the record and content that summarizes existing information.

Tier 2 Signals: Consistent Positive Impact

- Content freshness within 90 days (particularly important for ChatGPT and Perplexity)

- Passage-level answer clarity: self-contained paragraphs with a specific claim in the opening sentence

- Section length in the 120 to 180 word range between headings

- Page speed (FCP under 0.4 seconds)

- Entity clarity: clear organizational identity established within each page

- Media coverage and PR mentions that contribute to entity recognition

- Structured data markup (most effective for Gemini and AI Overviews)

Counter-Intuitive Findings: What Does Not Work for GEO

Several tactics that intuition and general SEO advice would suggest as GEO-positive have been found to have neutral or negative impact in large-scale research:

- Keyword-optimized titles: Pages with low keyword matching in their titles average 5.9 citations. Pages with highly keyword-optimized titles average 2.8. Broad, topic-describing titles outperform keyword-dense ones for AI citation.

- Question-style headings: Despite the logic that structuring headings as questions would help AI retrieval, pages using question-style H2 and H3 headings averaged fewer ChatGPT citations (3.4) than those using direct topic statements (4.3).

- FAQ schema specifically for ChatGPT: Pages with FAQ schema averaged 3.6 ChatGPT citations versus 4.2 for equivalent pages without FAQ schema. FAQ schema remains valuable for Google AI Overviews but should not be treated as a universal GEO tactic.

- Keyword stuffing: The Princeton GEO paper found that keyword-heavy rewrites produced a 10% decrease in AI visibility relative to the baseline, confirming that over-optimization is counterproductive in generative retrieval contexts.

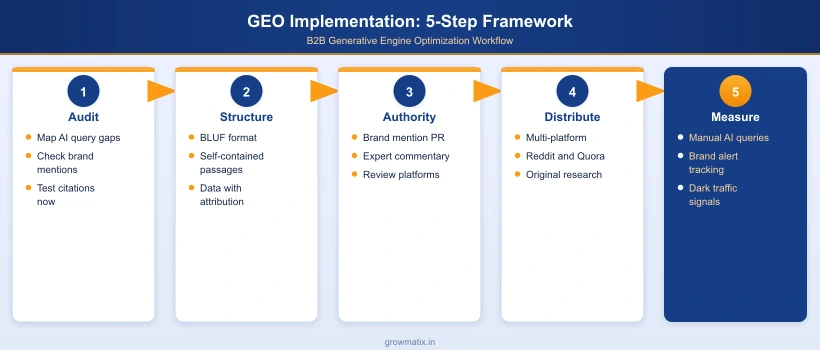

Practical GEO Strategy for B2B Content Teams

Translating GEO research into an executable B2B content program requires prioritizing actions based on your current domain authority, content infrastructure, and publishing capacity. Not all GEO tactics apply equally at every growth stage.

Passage-Level Content Architecture

The single most universally applicable GEO tactic, regardless of domain authority stage, is restructuring content at the passage level. Every H2 and H3 section should begin with a direct, self-contained answer to the question implied by the heading, in two to three sentences, before elaborating. This serves both AI retrieval and user experience simultaneously.

Audit your existing high-traffic pages with this test: read only the first sentence of each section. If those first sentences collectively summarize the article’s key claims, the content is structured for AI retrieval. If the first sentences are contextual framing (“In today’s complex digital landscape…”) rather than direct answers, the content structure is working against GEO citation eligibility regardless of topic coverage.
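
The first-sentence test can be partly automated. This is a rough sketch, assuming sections are already available as heading and body pairs. The sentence splitter is deliberately naive (it will trip on abbreviations), and the `FRAMING_OPENERS` list is a hypothetical starting point that you would tune to your own content. A human still needs to judge whether the extracted sentences actually answer the heading.

```python
import re

# Hypothetical phrases that usually signal contextual framing, not an answer.
FRAMING_OPENERS = ("in today", "in the modern", "as we all know", "it is no secret")


def first_sentences(sections: dict[str, str]) -> dict[str, str]:
    """Return the opening sentence of each section body."""
    result = {}
    for heading, body in sections.items():
        # Naive split: sentence ends at ., ! or ? followed by whitespace.
        parts = re.split(r"(?<=[.!?])\s+", body.strip(), maxsplit=1)
        result[heading] = parts[0]
    return result


def looks_like_framing(sentence: str) -> bool:
    """Flag sentences that open with a known framing phrase."""
    return sentence.lower().startswith(FRAMING_OPENERS)


sections = {
    "What Is GEO": (
        "GEO is the practice of structuring content so AI systems cite it. "
        "It differs from SEO in its unit of evaluation."
    ),
    "Why It Matters": (
        "In today's complex digital landscape, buyers research differently. "
        "AI tools are often the first stop."
    ),
}

openers = first_sentences(sections)
for heading, sentence in openers.items():
    flag = "REWRITE" if looks_like_framing(sentence) else "ok"
    print(f"[{flag}] {heading}: {sentence}")
```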

Entity Recognition and Brand Authority Building

Given that brand mentions correlate more strongly with AI citation than backlinks, B2B content teams need to expand their authority-building programs beyond traditional link acquisition:

- Original research and proprietary data: Publishing survey results, benchmark reports, or proprietary data analyses generates the type of citable information that other publications reference and that AI systems prioritize as a source of novel information.

- Expert commentary placement: Contributing expert quotes and commentary to industry publications, journalist queries, and roundup articles builds brand mentions in the credible, independent contexts that AI systems weight most heavily.

- Review platform completeness: For B2B SaaS brands specifically, complete and maintained profiles on G2, Capterra, and Trustpilot contribute to the entity recognition signals that ChatGPT citation correlates with.

- Community platform participation: Strategic Reddit and Quora participation, where brand representatives contribute genuine expertise rather than promotional content, builds the mention density that amplifies AI citation frequency over time.

For B2B marketing and SEO teams looking to execute this across a content program designed for both AI search and organic growth, our AI-ready B2B SEO services cover the full implementation across content architecture, entity building, and GEO measurement.

Measuring GEO Performance Across Platforms

Standard GA4 attribution significantly undercounts AI-referred traffic due to the dark AI traffic problem. Build a GEO measurement protocol that includes:

- Manual citation monitoring: Test your top 20 to 30 target queries monthly across ChatGPT, Perplexity, Gemini, and Claude. Record whether your brand appears, which URL is cited, and where your content ranks in the citation list.

- Brand mention tracking: Set up Ahrefs or Semrush brand alerts to monitor unlinked mentions in new publications. Growing brand mention volume across authoritative domains is a leading indicator of improving GEO citation eligibility.

- Direct traffic anomalies: AI-referred sessions that arrive without referrer headers show above-average engagement metrics (longer session duration, more pages per session) compared to true direct traffic. Monitoring for engagement anomalies in your direct channel can surface AI traffic that attribution tools miss.

- Conversion rate monitoring by channel: As AI referral volumes grow and become more reliably attributed, track conversion rates by AI source specifically. The conversion quality premium of AI traffic relative to organic makes this an increasingly important metric for B2B pipeline attribution.
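
The direct-traffic anomaly check can be sketched as a simple heuristic over exported session data. Everything here is an assumption for illustration: the `Session` fields, the `factor` threshold, and the sample data are hypothetical, and the output is a list of candidates for review, not proof that a session came from an AI platform.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Session:
    channel: str         # e.g. "direct", "organic"
    duration_sec: float  # session duration in seconds
    pages: int           # pages viewed in the session


def flag_possible_ai_sessions(sessions: list[Session], factor: float = 2.0) -> list[Session]:
    """Return direct sessions whose engagement is well above the direct median.

    A session is flagged when both its duration and its page count are at
    least `factor` times the median for the direct channel.
    """
    direct = [s for s in sessions if s.channel == "direct"]
    if not direct:
        return []
    duration_median = median(s.duration_sec for s in direct)
    pages_median = median(s.pages for s in direct)
    return [
        s
        for s in direct
        if s.duration_sec >= factor * duration_median and s.pages >= factor * pages_median
    ]


# Hypothetical export: four typical direct sessions, one outlier, one organic.
sessions = [
    Session("direct", 60, 2),
    Session("direct", 45, 1),
    Session("direct", 50, 2),
    Session("direct", 900, 12),
    Session("direct", 70, 2),
    Session("organic", 480, 9),
]

flagged = flag_possible_ai_sessions(sessions)
print(f"{len(flagged)} direct session(s) flagged for review")
```

Using the median rather than the mean keeps a handful of very long sessions from inflating the baseline they are compared against.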

Frequently Asked Questions

What is generative engine optimization (GEO)?

Generative engine optimization (GEO) is the practice of structuring and positioning content so that AI systems like ChatGPT, Perplexity, Google Gemini, and Claude select it as a cited source in their generated answers. Unlike traditional SEO which targets search engine rankings, GEO targets citation eligibility within AI-generated responses. The goal is not a link on a results page but inclusion in the synthesized answer itself, where your brand and content are attributed as a trusted source.

How is GEO different from traditional SEO?

Traditional SEO optimizes for search engine rankings by targeting algorithms that evaluate keywords, backlinks, and on-page signals to rank pages in a results list. GEO optimizes for AI citation by targeting retrieval systems that use semantic similarity, passage-level relevance, and entity trust to select sources for synthesized answers. The key structural difference: Google ranks pages and shows them to users who then click through. AI engines synthesize an answer and cite sources, meaning users may never visit your page at all. GEO builds brand visibility and topical authority across AI platforms, not just rankings.

How do I get cited by ChatGPT in its answers?

ChatGPT citations in web-browsing mode are driven primarily by domain authority: sites with high domain trust scores and large referring domain counts are cited disproportionately. Beyond domain authority, pages cited by ChatGPT tend to include expert quotes, multiple data points with specific figures, and content updated within the past three months. Engagement on platforms that ChatGPT draws from, such as Reddit, Quora, and review sites like G2 and Trustpilot, also correlates with ChatGPT citation frequency. There is no single shortcut: building domain authority and content credibility over time is the primary lever.

Does schema markup help with GEO and LLM citation?

Schema markup supports GEO primarily through two mechanisms: it helps AI systems understand entity relationships and page structure, and FAQPage schema maps question-and-answer pairs that AI retrieval systems can extract directly. However, research on ChatGPT citation patterns specifically found that FAQ schema correlated with slightly fewer citations, not more, suggesting that structured markup alone is not a citation determinant. The more consistently supported GEO tactic is passage-level content quality: self-contained paragraphs that answer a specific question without requiring surrounding context outperform schema markup as a citation driver.

How do I track whether AI platforms are citing my content?

Direct tracking is difficult because most AI-referred traffic arrives without a referrer header, making it invisible in standard analytics. Loamly research suggests around 70% of AI traffic falls into this "dark AI traffic" category. Practical tracking approaches include: manually querying target keywords in ChatGPT, Perplexity, Claude, and Gemini to check whether your brand or content is cited; monitoring brand mention volume in tools like Ahrefs or Semrush alerts; and watching for anomalies in direct traffic sessions with above-average engagement metrics, which often signal AI-referred visits. Dedicated AI visibility tools from BrightEdge and Semrush are expanding their tracking coverage in 2026.

How long does GEO take to show results?

GEO results follow a different timeline than traditional SEO. Domain-level authority signals, which are the strongest citation predictors for ChatGPT, build over months and years. Content structure and passage-level quality improvements can influence Perplexity and Gemini citation faster, sometimes within weeks of publication or update. The most realistic GEO timeline for B2B brands without established authority: three to six months to start seeing consistent brand mentions in AI responses for specific topic areas, and six to twelve months to build the domain-level trust signals that drive ChatGPT citation at scale.

Does GEO replace traditional SEO for B2B brands?

GEO does not replace traditional SEO; it extends it. Traditional organic search still accounts for roughly 48% of global internet traffic, while AI-referred traffic remains under 1%. However, AI search is growing rapidly and the conversion quality of AI-referred visitors is significantly higher than organic search visitors across multiple studies. The practical approach for B2B brands is to build on existing SEO foundations by adding GEO-specific elements: passage-level content architecture, entity recognition building, multi-platform distribution, and regular content freshness updates. GEO and SEO share most of their foundational requirements and optimize together more efficiently than separately.

Ready to Get Cited by ChatGPT, Perplexity and Gemini?

Growmatix builds B2B content programs with the passage architecture, entity authority, and platform-specific optimizations that turn AI search into a consistent citation and pipeline channel.
