B2B Site Architecture That Google and LLMs Understand


Site architecture is the most undervalued technical SEO lever for B2B companies. Most B2B sites accumulate pages over years without a deliberate structure: service pages at inconsistent depths, blog posts with no internal linking logic, and URLs that do not reflect any topical hierarchy. Google and AI systems read this disorganization directly from crawl patterns.

The result is predictable: topical authority is diluted across dozens of loosely connected pages, crawl budget is wasted on low-value URLs, and the pages that should rank for high-intent B2B queries lack the internal link signals that push them up in the priority queue.

This guide covers how to design and implement a B2B site architecture that search engines and LLMs can navigate efficiently, the specific silo model that works for B2B, URL hierarchy best practices, crawl budget optimization for enterprise sites, and an audit process for fixing existing structural problems. It builds on the broader principles in any solid B2B technical SEO guide, but goes deeper on the information architecture layer specifically.

Why Site Architecture Is a Core B2B SEO Factor in 2026

Site architecture affects three things that directly influence B2B search rankings:

Crawl efficiency. Googlebot allocates a crawl budget to each site. Enterprise B2B sites with hundreds of pages, filter parameters, duplicate URLs, and deeply nested content waste significant crawl budget on pages that will never rank. When crawl budget is wasted, pages that matter get crawled less frequently. Slow crawl recurrence means slower indexation of new content and slower recovery when content is updated.

PageRank distribution. Internal links carry PageRank (link equity). The architecture of your site determines how PageRank flows. A flat, unstructured site distributes equity across all pages equally, including pages that have no ranking potential. A silo-structured site concentrates equity toward hub pages and key service pages, amplifying the ranking power of pages that generate revenue.

Topical authority signals. According to Search Engine Land’s site architecture guide, search engines evaluate topical signals at the domain level, not just the page level. A B2B website where all SEO-related content is clearly grouped under one silo signals deeper expertise than a site where SEO, PPC, and social media content is intermixed without structure. This topical concentration is a direct input into how Google evaluates E-E-A-T at the site level.

For LLM-based search systems (AI Overviews, Perplexity, ChatGPT with web access), architecture clarity is even more important. AI systems parse site-level signals during indexation to understand what a domain is an authority on. A B2B site with a clean, well-structured architecture sends clearer entity and topical signals than one with scattered, unconnected pages.

The Silo Structure Model: How B2B Websites Should Organize Content

Silo structure groups website content into thematic buckets where each bucket (silo) covers a distinct topic area. Within a silo, pages link heavily to each other and back to the hub page. Cross-silo linking is used strategically but sparingly, to avoid diluting topical signals.

For B2B companies, silos typically map to one of three frameworks:

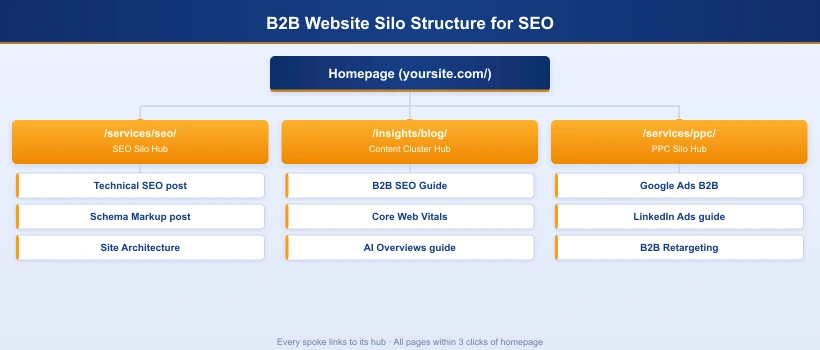

Framework 1: Service-Based Silos

Best for B2B agencies, consultancies, and professional services firms with multiple distinct offerings.

- Hub page: /services/ (overview of all services)

- Silo 1: /services/seo/ with spokes: /insights/blog/[all-seo-posts]

- Silo 2: /services/ppc/ with spokes: /insights/blog/[all-ppc-posts]

- Silo 3: /services/content-marketing/ with spokes: /insights/blog/[all-content-posts]

Framework 2: Buyer Persona Silos

Best for SaaS companies and platforms serving multiple audience segments.

- Hub page: /solutions/

- Silo 1: /solutions/for-enterprise/ with all enterprise-specific pages

- Silo 2: /solutions/for-mid-market/ with all mid-market pages

- Silo 3: /solutions/for-startups/ with startup-focused content

Framework 3: Use Case Silos

Best for horizontal SaaS products where the same platform solves different problems for different users.

- Hub page: /use-cases/

- Silo 1: /use-cases/sales-pipeline-management/

- Silo 2: /use-cases/customer-onboarding/

- Silo 3: /use-cases/revenue-operations/

Whichever framework fits your business model, the silo structure principle is the same: every piece of content belongs to one primary silo, is internally linked within that silo, and links back to the silo’s hub page.

How to Build a URL Hierarchy That Signals Topical Authority

Your URL structure should be a readable map of your site architecture. When Google and AI crawlers see /services/seo/technical-seo-audit/, they immediately understand the page hierarchy: it is a child of the SEO service hub, which is a child of the services hub. This hierarchical clarity is a topical signal.

B2B URL Structure Best Practices

| Page Type | Recommended URL Pattern | Avoid |
|---|---|---|
| Homepage | yoursite.com/ | yoursite.com/home/ |
| Service hub | /services/ | /what-we-do/ or /offerings/ |
| Individual service | /services/b2b-seo/ | /b2b-seo-services-agency-2026/ |
| Blog hub | /insights/blog/ or /blog/ | /news-updates/ or /resources/articles/ |
| Blog post | /insights/blog/b2b-technical-seo-audit/ | /insights/blog/2024/march/b2b-seo-audit/ |
| Case study | /case-studies/saas-company-seo-results/ | /portfolio/project-245/ |
| Landing page (PPC-only) | /lp/b2b-seo-audit/ with a noindex tag | Leaving PPC-only landing pages indexable |

Key URL rules for B2B sites:

- Never include the year in blog post slugs. Use evergreen slugs and update content in place

- Keep URLs lowercase with hyphens only. Underscores and mixed case create duplicate URL risks

- Maximum 3 slash levels for most pages: /hub/sub-hub/page/ is the recommended maximum

- Use the primary keyword in the slug, but do not keyword-stuff: /services/b2b-seo/ beats /b2b-seo-agency-services-company-2026/

- Never change established URLs without a 301 redirect. Without one, backlinks pointing at the old URL stop passing equity to the page
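The slug rules above are mechanical enough to check in bulk. The following is a minimal sketch, assuming you have a list of URLs exported from a crawler; the function name `check_url` and the three-level limit constant are illustrative choices, not part of any tool.

```python
import re
from urllib.parse import urlparse

# Four-digit years such as 2024 or 2026, not longer numbers or IDs like 245.
YEAR_PATTERN = re.compile(r"(?<!\d)(19|20)\d{2}(?!\d)")
MAX_DEPTH = 3  # /hub/sub-hub/page/


def check_url(url: str) -> list[str]:
    """Return the rule violations for one URL (an empty list means clean)."""
    path = urlparse(url).path
    segments = [s for s in path.split("/") if s]
    problems = []
    if path != path.lower():
        problems.append("contains uppercase characters")
    if "_" in path:
        problems.append("uses underscores instead of hyphens")
    if YEAR_PATTERN.search(path):
        problems.append("contains a year")
    if len(segments) > MAX_DEPTH:
        problems.append(f"more than {MAX_DEPTH} path levels")
    return problems


for url in ["/services/b2b-seo/", "/insights/blog/2024/march/b2b-seo-audit/"]:
    print(url, check_url(url))
```

The check does not cover keyword stuffing, which still needs a human read.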

Crawl Budget Optimization for Enterprise B2B Sites

Crawl budget matters for B2B enterprise sites with over 500 pages, but it also matters for smaller sites that have a high ratio of low-quality URLs. According to Google Search Central’s crawl budget documentation, Googlebot determines crawl budget based on crawl capacity (server health, response times) and crawl demand (how often Google wants to recrawl pages, driven mainly by their popularity and by how stale the indexed copy is).

Where B2B Sites Waste Crawl Budget

- Filter and faceted navigation URLs: /services/?industry=saas&size=enterprise is one of a very large number of parameter combinations that Googlebot may attempt to crawl. Block these with robots.txt disallow rules. Google retired the Search Console URL Parameters tool in 2022, so it is no longer an option

- Paginated archives: /blog/page/2/ through /blog/page/47/ generates crawl demand for low-priority pages. rel="next"/rel="prev" markup is harmless, but Google has said it no longer uses it as an indexing signal, so limit pagination to three pages and link to “see all” instead

- Thank-you and confirmation pages: /thank-you-for-contacting-us/ should be noindexed. These pages have no SEO value and consume crawl budget

- Staging and test pages: If staging is on the same domain or accessible to crawlers, it diverts significant crawl budget. Block via robots.txt or require authentication

- Redirect chains: A URL that redirects to another URL that redirects to a third forces Googlebot to follow multiple hops. Flatten redirects to point directly to the final destination

- Broken internal links (404s): Each 404 response wastes a crawl request. Monthly broken link audits with tools like Screaming Frog or Ahrefs should be standard maintenance
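Flattening redirect chains, from the list above, can be sketched in a few lines. This assumes you have exported your redirect rules as a simple source-to-target mapping; the function name `flatten_redirects` is hypothetical.

```python
def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Map every redirecting URL straight to its final destination."""
    flattened = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Keep following hops until we reach a URL that does not redirect.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flattened[source] = target
    return flattened


chain = {"/old-seo/": "/seo-services/", "/seo-services/": "/services/seo/"}
print(flatten_redirects(chain))
```

In the example, both old URLs end up pointing directly at /services/seo/, so Googlebot follows one hop instead of two.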

How to Increase Crawl Frequency for Priority Pages

- Add internal links from frequently crawled pages to the pages you want crawled more often. Pages linked from the homepage or high-traffic blog posts get crawled more frequently

- Submit the page URL in Google Search Console after significant content updates using the URL inspection tool’s “Request Indexing” function

- Improve server response time for priority pages. Pages that respond quickly get more crawl budget allocated because Googlebot can process them faster without overloading your server

- Keep the sitemap current. Submit an updated sitemap.xml within 24 hours of publishing new content or making major page updates
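Keeping the sitemap current is easier when it is generated rather than edited by hand. Below is a minimal sketch using Python's standard library, assuming you can supply each indexable URL with its last-modified date; `build_sitemap` and the example URLs are illustrative.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: dict[str, str]) -> str:
    """Build sitemap XML from {absolute URL: last-modified date (YYYY-MM-DD)}."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in sorted(pages.items()):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    # Returns the XML body only; prepend an XML declaration when writing to disk.
    return ET.tostring(urlset, encoding="unicode")


print(build_sitemap({
    "https://example.com/services/": "2026-01-15",
    "https://example.com/services/seo/": "2026-02-03",
}))
```

Running this as part of the publish step means the lastmod values change only when the content actually changes.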

LLM-Friendly Architecture: What AI Systems Need to Understand Your B2B Site

LLMs and AI search systems (Google Gemini, Perplexity, ChatGPT with web access) have specific requirements that differ slightly from traditional Googlebot requirements. Understanding these requirements allows B2B teams to architect sites that perform well in both traditional and AI-powered search.

What Makes a B2B Site LLM-Readable

Clear topical scope signals. AI systems analyze your domain to determine what topics you are authoritative on before deciding whether to cite you. A site where the homepage, navigation, and top-level URLs clearly communicate “B2B digital marketing agency specializing in SEO” sends clearer topical scope than one where the homepage is a generic welcome page. Your site architecture is how you communicate scope at scale.

Entity definition at the page level. Every page should define the entities it discusses: what the service is, who it serves, what problems it solves, and how it compares to alternatives. AI systems extract this context from headings, structured data, and the first 200 words of content. Pages that define entities clearly at the top are more likely to be cited accurately.

Direct answer blocks. Add a brief (40-80 word) direct answer to the primary question a page addresses, placed in the first or second paragraph. This mirrors the format AI systems use when generating answers and increases the likelihood of verbatim or near-verbatim citation. For a page about “how to audit B2B site architecture,” the first paragraph should directly answer that question before expanding into detail.

Clean robots.txt that allows AI crawlers. Several AI systems have their own crawlers: GPTBot (OpenAI), ClaudeBot (Anthropic), and PerplexityBot. Google's AI Overviews draw on the regular Googlebot index, while the separate Google-Extended robots.txt token controls whether content can be used for Gemini. Many B2B sites have robots.txt rules that accidentally block these crawlers. Audit your robots.txt to ensure you are not blocking crawlers from AI platforms you want to appear in. Disallow only the crawlers you have a specific reason to exclude, and remember that robots.txt only restrains crawlers that choose to honor it.
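A robots.txt audit for AI crawlers can be sketched with Python's built-in `urllib.robotparser`. The user-agent list and the sample rules below are assumptions for illustration; substitute your own robots.txt content and the crawlers you care about.

```python
from urllib.robotparser import RobotFileParser

# Illustrative list; adjust to the platforms you want to appear in.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Googlebot"]


def blocked_crawlers(robots_txt: str, url: str, agents=AI_CRAWLERS) -> list[str]:
    """Return the user agents that robots_txt disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /staging/
"""

print(blocked_crawlers(sample, "https://example.com/services/seo/"))
```

With the sample rules, only GPTBot is reported as blocked from the service page, which is the kind of accidental exclusion the audit is meant to surface.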

Schema markup as entity context. As covered in the schema markup strategy for B2B service pages, structured data is the most direct technical signal you can provide to AI systems about what your pages are and who they serve. Service, Organization, and FAQPage schemas are the highest-priority types for B2B sites targeting AI-powered search features.
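As a rough illustration of Service schema, the sketch below builds a JSON-LD object with Python's `json` module. The property names (`provider`, `audience`, `audienceType`) come from schema.org; the helper name `service_jsonld` and every example value are hypothetical.

```python
import json


def service_jsonld(name: str, description: str, url: str,
                   provider_name: str, audience: str) -> str:
    """Return a JSON-LD string describing one service page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Service",
        "name": name,
        "description": description,
        "url": url,
        "provider": {"@type": "Organization", "name": provider_name},
        "audience": {"@type": "Audience", "audienceType": audience},
    }
    return json.dumps(data, indent=2)


print(service_jsonld(
    name="B2B SEO",
    description="Technical and content SEO for B2B companies.",
    url="https://example.com/services/b2b-seo/",
    provider_name="Example Agency",
    audience="B2B SaaS marketing teams",
))
```

The output would be embedded in the page inside a script tag of type application/ld+json, and should be validated before release.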

Internal Linking as the Backbone of Site Architecture

Site architecture exists on paper. Internal linking is what makes it real. Without deliberate internal links, even a perfectly designed silo structure fails to concentrate PageRank and topical authority where it belongs. Internal links are the physical implementation of your architectural plan.

The key principles for B2B internal linking:

- Every spoke page must link back to its hub. The internal link should appear naturally in the body content, not just in breadcrumbs or navigation

- Hub pages must link to all their spokes. This creates the bidirectional relationship that silo structure requires

- Anchor text should be descriptive and keyword-rich. “SEO for B2B companies” is better anchor text than “this page” or “click here”

- Newly published pages should receive internal links from 2-3 existing pages immediately. A new page with no internal links pointing to it is effectively invisible to Googlebot until it appears in the sitemap

- High-traffic pages are your best internal link sources. A link from your most-visited blog post carries more PageRank than a link from a page with no organic traffic

For teams building out an internal linking strategy for B2B websites systematically, the starting point is always the content audit: map all existing pages, identify their silo membership, and catalog which hub-to-spoke and spoke-to-hub links are missing. This gap analysis drives the internal linking sprint.

B2B Site Navigation: What Most Companies Get Wrong

Navigation architecture is inseparable from site architecture. The navigation menu is one of the highest-PageRank internal link sources on your site (every page typically includes it), and its structure directly signals your content hierarchy to crawlers.

Common B2B Navigation Mistakes

| Mistake | SEO Impact | Fix |
|---|---|---|
| Mega menus with 30+ links | PageRank diluted across too many pages; crawl signal weakened | Limit primary nav to 5-7 top-level items; move secondary links to footer or content |
| JavaScript-only navigation | Googlebot may not render JS nav; links not followed; crawl paths broken | Ensure nav links exist in static HTML; use progressive enhancement |
| No link to blog/insights in main nav | Blog pages receive less PageRank; content cluster underperforms | Add Insights or Blog to primary nav to pass PageRank to content cluster hub |
| Service pages not in nav | Key commercial pages receive reduced crawl priority | Include all primary service pages in nav or in a linked Services hub page in nav |
| Using nofollow on internal nav links | Blocks PageRank flow to target pages | Remove nofollow from internal navigation links; nofollow is for external links |
| Footer with duplicate full navigation | Duplicate link targets; Google may discount footer link equity | Footer should contain secondary links (privacy, contact, social) not duplicates of main nav |

How to Audit and Fix Your B2B Site Architecture

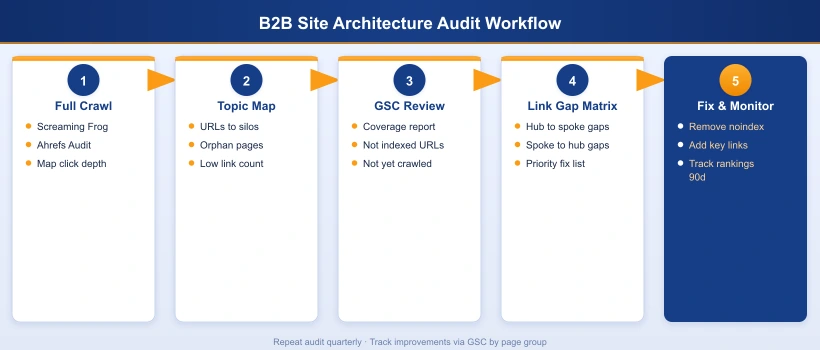

A site architecture audit produces a prioritized action list: which structural problems are costing you the most ranking potential, and in what order to fix them.

The B2B Site Architecture Audit Process

Step 1: Full crawl with Screaming Frog or Ahrefs Site Audit

Run a complete crawl of your site. Export all URLs with their page depth (click depth from homepage), HTTP status, internal link count, and inbound internal link count. Sort by page depth to identify pages buried more than three clicks deep.
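Crawlers report click depth for you, but the calculation is a plain breadth-first search over the internal link graph. A minimal sketch, assuming the crawl export has been loaded as a mapping from each page to the pages it links to (`click_depths` is an illustrative name):

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Return the minimum number of clicks from home to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


links = {
    "/": ["/services/", "/insights/blog/"],
    "/services/": ["/services/seo/"],
    "/services/seo/": ["/services/seo/audit/"],
    "/services/seo/audit/": ["/services/seo/audit/checklist/"],
}
depths = click_depths(links)
buried = [url for url, depth in depths.items() if depth > 3]
print(buried)
```

Any crawled URL that is missing from the result is unreachable from the homepage through internal links, which makes it an orphan page worth reviewing.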

Step 2: Topic clustering

Map every indexed URL to a primary topic silo. Use a spreadsheet. For each URL, record: URL, page type (service, blog, case study, landing page), silo assignment, current page depth, and inbound internal link count. Pages with zero or one inbound internal links are your priority fix targets.

Step 3: URL structure review

Flag URLs that contain years, are unnecessarily long (over 80 characters), use underscores instead of hyphens, or do not reflect their page type. Prioritize fixing URL structure only if the page has no established backlinks. For pages with backlinks, fix the internal link structure rather than changing the URL and risking redirect chain problems.

Step 4: Crawl waste identification

In Google Search Console's Page indexing report (formerly Coverage), review the “Crawled - currently not indexed” and “Discovered - currently not indexed” lists. Crawled but not indexed indicates quality or content issues on those pages. Discovered but not crawled indicates crawl budget is being exhausted elsewhere before Googlebot reaches those pages. Prioritize fixing the latter category by adding internal links to those pages from high-traffic sources.

Step 5: Internal link gap matrix

For each silo, verify that every spoke page links back to its hub, and every hub page links to all its spokes. Document gaps in a matrix. Resolve missing links with content edits to existing pages rather than creating new pages.
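The gap matrix can be produced programmatically once silo membership and internal links are in a spreadsheet export. A minimal sketch, assuming two inputs you build yourself: a hub-to-spokes mapping and a page-to-outbound-links mapping (`link_gaps` is a hypothetical helper):

```python
def link_gaps(silos: dict[str, list[str]],
              links: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Return (source, target) pairs for every missing hub/spoke link."""
    gaps = []
    for hub, spokes in silos.items():
        for spoke in spokes:
            if spoke not in links.get(hub, set()):
                gaps.append((hub, spoke))  # hub does not link to its spoke
            if hub not in links.get(spoke, set()):
                gaps.append((spoke, hub))  # spoke does not link back to hub
    return gaps


silos = {"/services/seo/": ["/insights/blog/seo-audit/", "/insights/blog/link-building/"]}
links = {
    "/services/seo/": {"/insights/blog/seo-audit/"},
    "/insights/blog/seo-audit/": {"/services/seo/"},
    "/insights/blog/link-building/": set(),
}
for source, target in link_gaps(silos, links):
    print(f"add link: {source} -> {target}")
```

Each pair in the output is one content edit: open the source page and add a contextual link to the target.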

Step 6: Implement fixes in order of impact

Prioritize fixes in this order: (1) Remove crawl waste (noindex thank-you pages, block filter URLs), (2) Flatten redirect chains, (3) Add internal links to deeply buried priority pages, (4) Add missing hub-to-spoke and spoke-to-hub links, (5) Fix URL structures where no backlinks are at risk, (6) Improve navigation architecture to better distribute PageRank.

Frequently Asked Questions

How should a B2B website be structured for SEO?

A B2B website should use a hub-and-spoke silo structure: a small number of hub pages (service categories, topic clusters) supported by deeply linked spoke pages (individual service pages, blog posts, case studies). The URL hierarchy should reflect this structure: /services/ as the hub, /services/seo/ and /services/ppc/ as first-level spokes, and /insights/blog/ as the content cluster hub. Every page should be reachable within three clicks from the homepage, and internal links should flow from spokes back to hubs to concentrate topical authority where it has the most ranking impact.

What is silo structure in SEO and does it work for B2B websites?

Silo structure is a content organization method where related pages are grouped into thematic buckets (silos), each reinforcing a specific topic. Internal links within each silo are prioritized, with limited cross-silo linking to prevent topic dilution. For B2B websites, silo structure works best when the silos map directly to the services you offer or the buyer personas you target. A B2B firm offering SEO, PPC, and content marketing would have three silos, each with its own hub page and 5-10 supporting blog posts and case studies. Google can more confidently assign topical authority to your site when the content architecture clearly signals what your core subjects are.

How do I optimize crawl budget for a B2B enterprise website?

Crawl budget optimization for B2B enterprise sites focuses on three areas: reducing crawl waste, increasing crawl frequency for priority pages, and improving page discovery efficiency. Reduce crawl waste by noindexing or blocking filter pages, pagination duplicates, thank-you pages, and admin URLs. Increase priority page crawl frequency by strengthening internal links to them (more internal links = higher crawl priority signal). Improve discovery by submitting an updated sitemap after every publish and keeping site depth under three clicks for all pages you want indexed. Use the Page indexing report in Google Search Console (formerly Coverage) to identify pages Google is crawling but not indexing, which indicates architecture or quality issues.

What makes a website LLM-friendly in 2026?

LLM-friendly site architecture shares most of its properties with solid traditional SEO architecture, but with a few additional requirements. First, content should have clear topical organization that AI systems can parse from headings, URL structure, and internal linking patterns. Second, pages should define entities explicitly: who you are, what you offer, who you serve, and how you compare to alternatives. Third, structured data (schema markup) provides machine-readable context that LLMs use when deciding which sources to cite. Fourth, a publicly accessible sitemap.xml and robots.txt that does not block AI crawlers (Googlebot, GPTBot, ClaudeBot, PerplexityBot) ensures the content is discoverable. Fifth, answer blocks at the top of key pages, written as direct responses to specific questions, align with how LLMs extract information for citation.

How many clicks deep should B2B website pages be?

Pages should be accessible within three clicks from the homepage. This is the standard crawl efficiency benchmark for B2B websites of any size. A page that requires five or more clicks to reach is effectively buried in terms of crawl priority: Googlebot and AI crawlers spend less time and crawl budget on deeply nested pages. Map your current click depth using a site crawler (Screaming Frog, Ahrefs Site Audit, Sitebulb) and identify any commercial or service pages beyond three clicks. Elevate these by adding internal links from hub pages, the homepage navigation, and frequently crawled blog posts.

Should B2B websites use year-based URLs for blog posts?

No. Year-based URLs like /blog/2024/b2b-seo-guide/ create two problems: they signal content aging to both users and crawlers, and they require URL changes or redirects when content is updated for a new year. Use keyword-rich, evergreen slugs: /insights/blog/b2b-seo-guide/. This slug remains stable across updates, accumulates backlink equity over time, and does not trigger perceived freshness concerns. The only exception is when the year is a meaningful part of the topic itself, such as annual industry reports, but even these are better served by updating the existing URL rather than creating a new year-based one.

How do I fix a flat B2B site architecture that has no topic structure?

A flat architecture fix involves three phases. Phase 1: Audit and categorize all existing content by topic using a site crawler and a spreadsheet. Group pages into 3-5 primary topic silos. Phase 2: Create or identify hub pages for each silo. If hub pages do not exist, create them. Ensure each hub page covers its topic comprehensively, links to all of its spoke pages, and is linked from the main navigation. Phase 3: Add or strengthen internal links from spoke pages back to their hub, and from hubs to the homepage. This restructuring phase usually takes 4-8 weeks and can produce measurable ranking improvements within 60-90 days for competitive B2B keywords, as Google recrawls and reassesses topical authority.

Our B2B SEO team audits your site architecture, identifies crawl waste, restructures your content silos, and builds the internal linking strategy your site needs to compete for high-intent B2B search queries.