The Complete B2B Technical SEO Playbook for 2026


Technical SEO for B2B companies operates under constraints that most consumer-facing guides ignore. B2B sites carry more complex page architectures, longer buyer journeys, and content structures that demand precise crawl hierarchies across hundreds of URLs. In 2026, with Google AI Overviews and LLM crawlers now part of the indexing equation, technical SEO determines not only whether your content ranks but whether it is machine-readable enough to be cited in AI-generated answers.

This playbook covers the complete B2B technical SEO stack: Core Web Vitals, site architecture, schema markup, JavaScript rendering, and a structured quarterly audit process.

What Is B2B Technical SEO?

B2B technical SEO is the optimization of a website’s infrastructure so search engines can efficiently discover, crawl, render, and index its content. It is the foundation layer that determines whether your content can rank at all, regardless of how good that content is.

While the fundamentals overlap with B2C, B2B sites face four distinct challenges that make technical optimization more demanding:

- Architectural complexity: Multiple service lines, industry verticals, and buyer persona paths create deeply nested URL structures that strain crawl efficiency and dilute PageRank

- JavaScript-heavy builds: B2B SaaS marketing sites commonly use React or Vue, so content that only appears after client-side rendering may be indexed late or incompletely

- Schema gaps: Service pages, case studies, and team pages are rarely marked up with structured data, limiting rich result and AI Overview eligibility

- LLM indexing requirements: AI crawlers need well-structured, unambiguous content to cite accurately. Technical clarity now directly affects AI-generated visibility

Technical SEO does not include content writing, keyword research, or link acquisition. It is the structural prerequisite for all other SEO work to deliver results.

Core Web Vitals and B2B Page Performance

Google’s Core Web Vitals became a confirmed ranking factor with the Page Experience update in 2021 and remain an active ranking signal in competitive B2B search niches in 2026. The three current metrics (INP replaced First Input Delay in March 2024):

| Metric | What It Measures | "Good" Threshold (75th percentile) | Common B2B Failure Point |
|---|---|---|---|
| LCP (Largest Contentful Paint) | Time to render the largest image or text block in the viewport | 2.5 seconds or less | Unoptimized hero images, render-blocking fonts |
| CLS (Cumulative Layout Shift) | Visual stability during page load | 0.1 or less | Late-loading navbars, injected banners |
| INP (Interaction to Next Paint) | Responsiveness to user input | 200 ms or less | Heavy JavaScript event handlers on interactive elements |

CWV data is available per URL group in Google Search Console’s Core Web Vitals report. It is sourced from Chrome User Experience Report (CrUX) field data and assessed at the 75th percentile of page loads. Fix pages with LCP above 4 seconds (Google’s “poor” threshold) first, because these carry the highest ranking uplift potential per fix.
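To triage a CrUX or Search Console export, you can bucket each metric's 75th-percentile value against Google's published thresholds and then queue the worst LCP groups first. The sketch below is minimal and assumes you already have p75 values per URL group. The group names and numbers are hypothetical.

```python
# Google's published Core Web Vitals boundaries, applied to 75th-percentile
# field values. LCP is in seconds, CLS is unitless, INP is in milliseconds.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # good <= 2.5 s, poor > 4.0 s
    "CLS": (0.1, 0.25),  # good <= 0.1, poor > 0.25
    "INP": (200, 500),   # good <= 200 ms, poor > 500 ms
}


def classify(metric: str, p75: float) -> str:
    """Return 'good', 'needs improvement', or 'poor' for one metric value."""
    good_max, poor_above = THRESHOLDS[metric]
    if p75 <= good_max:
        return "good"
    if p75 <= poor_above:
        return "needs improvement"
    return "poor"


def lcp_fix_queue(lcp_by_group: dict[str, float]) -> list[str]:
    """URL groups with poor LCP (above 4 s), worst first."""
    poor = [g for g, lcp in lcp_by_group.items() if classify("LCP", lcp) == "poor"]
    return sorted(poor, key=lambda g: lcp_by_group[g], reverse=True)


# Hypothetical p75 LCP values per URL group, in seconds.
groups = {"/service/": 4.8, "/insights/blog/": 3.1, "/pricing/": 5.2}
print(lcp_fix_queue(groups))  # ['/pricing/', '/service/']
```

The "needs improvement" band is kept separate so those groups can form a second queue once the poor groups are fixed.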

For a full breakdown of how to diagnose and fix Core Web Vitals on B2B sites: Core Web Vitals for B2B Websites: What Actually Moves Rankings.

B2B Site Architecture: Structure for Google and LLMs

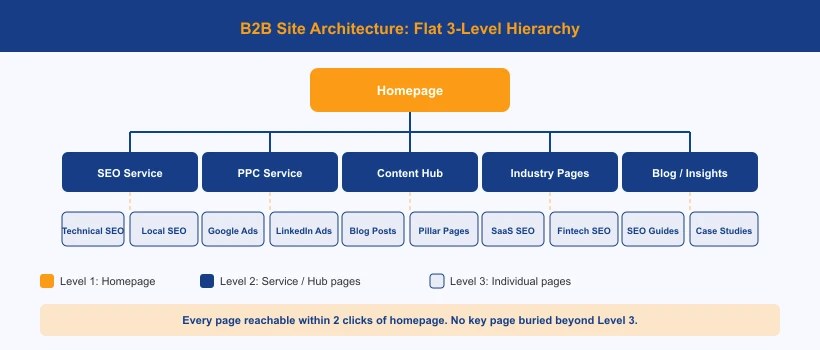

Site architecture controls how Googlebot distributes crawl budget and how LLM indexing crawlers interpret your content hierarchy. A flat architecture where key pages sit within two clicks of the homepage consistently outperforms deeply nested structures on both crawl efficiency and PageRank distribution.

The recommended B2B site architecture is built from five core page types:

- Homepage: links to all major service category pages and primary content hubs

- Service category pages: one per major offering (e.g. /service/seo/, /service/ppc/)

- Individual service pages: targeting specific, high-intent search queries and marked up with Service schema

- Industry or vertical landing pages: targeting by sector (e.g. /seo-for-saas-companies/)

- Blog or insights hub: topic cluster content organized under /insights/blog/
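Click depth can be measured with a breadth-first search over the internal link graph, starting at the homepage. The minimal Python sketch below assumes each page's internal outlinks have been exported into a dict, for example from a crawler. The paths are hypothetical. A page that never appears in the search result cannot be reached through internal links, and this is how orphan pages show up.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Minimum number of clicks from the homepage to each reachable page (BFS)."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS = shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def architecture_report(links, all_pages, max_depth=3, home="/"):
    """Return (pages deeper than max_depth, pages unreachable from home)."""
    depths = click_depths(links, home)
    too_deep = sorted(p for p, d in depths.items() if d > max_depth)
    unreachable = sorted(set(all_pages) - set(depths))
    return too_deep, unreachable


# Hypothetical internal link graph: page -> internal links found on it.
links = {
    "/": ["/service/seo/", "/insights/blog/"],
    "/service/seo/": ["/service/seo/technical-audit/"],
    "/insights/blog/": ["/insights/blog/crawl-budget/"],
    "/insights/blog/crawl-budget/": ["/insights/blog/crawl-budget/part-2/"],
    "/insights/blog/crawl-budget/part-2/": ["/insights/blog/crawl-budget/part-3/"],
}
all_pages = set(links) | {
    "/insights/blog/crawl-budget/part-3/",
    "/service/seo/technical-audit/",
    "/seo-for-saas-companies/",
}
print(architecture_report(links, all_pages))
# (['/insights/blog/crawl-budget/part-3/'], ['/seo-for-saas-companies/'])
```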

URL Structure Rules for B2B Sites

- Keep URLs under 100 characters

- Lowercase, hyphenated slugs only. No underscores, no camelCase

- No query parameter strings in page URLs (?id=123 or ?category=seo)

- No subfolders deeper than three levels for service and blog content

- No dates in blog URL slugs. They make content appear outdated in SERPs
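These rules are mechanical enough to check in bulk against a crawl export. The Python sketch below is minimal. Its limits come from the list above. The date pattern is an assumption you may need to adjust: it flags any path segment that starts with a 19xx or 20xx year.

```python
import re
from urllib.parse import urlsplit

SLUG = re.compile(r"^[a-z0-9]+(?:-[a-z0-9]+)*$")      # lowercase, hyphen-separated
YEAR_SEGMENT = re.compile(r"^(?:19|20)\d{2}(?:-|$)")  # e.g. "2025" or "2025-..."


def url_issues(url: str, max_chars: int = 100, max_levels: int = 3) -> list[str]:
    """List which of the URL structure rules a page URL breaks."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    issues = []
    if len(url) >= max_chars:  # rule: keep URLs *under* 100 characters
        issues.append("too long")
    if parts.query:
        issues.append("query string")
    if len(segments) > max_levels:
        issues.append("too deep")
    issues += [f"bad slug: {s}" for s in segments if not SLUG.match(s)]
    if any(YEAR_SEGMENT.match(s) for s in segments):
        issues.append("date in path")
    return issues


print(url_issues("https://example.com/Blog/SEO_Guide/"))
# ['bad slug: Blog', 'bad slug: SEO_Guide']
```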

Crawl Budget Management

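Google’s own crawl budget guidance is aimed mainly at very large sites. Even so, B2B sites with more than 300 indexed pages benefit from actively reducing crawl waste, so that Googlebot spends its visits on the pages that matter. The primary sources of crawl waste to eliminate: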

- Faceted navigation and filter URLs (e.g. /blog/?category=seo&year=2025)

- Tag and category archive pages that duplicate main page content

- Paginated archive pages beyond page two (add noindex if content is thin)

- Multiple URL variations resolving to the same canonical page
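To find URL variations that collapse onto the same page, normalize every crawled URL and group the results. The minimal Python sketch below assumes the preferred form is HTTPS, non-www and lowercase, with a trailing slash and no query string. It treats every query parameter as one that does not change page content, which you should verify for each site.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit


def normalize(url: str) -> str:
    """Map a URL to the assumed canonical form (https, non-www, lowercase, trailing slash)."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[len("www."):]
    path = parts.path.lower() or "/"
    last_segment = path.rsplit("/", 1)[-1]
    if not path.endswith("/") and "." not in last_segment:  # leave files like sitemap.xml alone
        path += "/"
    return urlunsplit(("https", host, path, "", ""))  # query and fragment dropped


def duplicate_groups(urls: list[str]) -> dict[str, list[str]]:
    """Canonical URL -> crawled variants, only where more than one variant exists."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {canon: variants for canon, variants in groups.items() if len(variants) > 1}


crawled = [
    "http://www.example.com/service/seo",
    "https://example.com/service/seo/",
    "https://example.com/blog/?category=seo&year=2025",
    "https://example.com/blog/",
    "https://example.com/pricing/",
]
print(duplicate_groups(crawled))
```

Each variant in a group should either 301 to the canonical URL or carry a canonical tag that points to it.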

For a detailed guide on B2B information architecture and silo structure: B2B Site Architecture That Google and LLMs Understand.

Schema Markup on B2B Service Pages

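Service pages are the highest commercial-intent pages on any B2B website, yet they are consistently the least optimized for structured data. Service schema with correct attributes makes the offering, its provider, and its service area explicit to search engines and AI systems, which can help them interpret and cite the page. Google offers no dedicated Service rich result, so rich-result eligibility comes from the supporting types in the table below.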

| Schema Type | Key Fields | Purpose |
|---|---|---|
| Service | name, description, provider, areaServed, serviceType | Identifies the page as a specific service offering |
| Organization | @id, name, url, address, sameAs | Identifies and establishes the service provider entity |
| BreadcrumbList | itemListElement (ListItem with position, name, item) | Enables breadcrumb rich results in SERPs |
| FAQPage | mainEntity with Question and Answer pairs | Makes Q&A content machine-readable for AI Overviews; since August 2023, Google shows FAQ rich results only for well-known, authoritative government and health sites |

Implement all structured data as JSON-LD in a single script block using the @graph array format. Entities in the graph can reference one another by @id (for example, the Service's provider can point to the Organization), and validator.schema.org still lists each type as a separate card. For a complete B2B service page schema implementation guide: Schema Markup Strategy for B2B Service Pages.
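Below is a minimal Python sketch of the single-block @graph pattern for a service page. All names, URLs and the "#organization" @id suffix are hypothetical placeholders. Check the output in validator.schema.org before deploying it.

```python
import json


def service_page_jsonld(org: dict, service: dict,
                        breadcrumbs: list[tuple[str, str]],
                        faqs: list[tuple[str, str]]) -> str:
    """Build one JSON-LD <script> block holding all entities in an @graph."""
    org_id = org["url"].rstrip("/") + "/#organization"  # hypothetical @id convention
    graph = [
        {"@type": "Organization", "@id": org_id, "name": org["name"],
         "url": org["url"], "address": org["address"], "sameAs": org["same_as"]},
        {"@type": "Service", "name": service["name"],
         "description": service["description"], "serviceType": service["type"],
         "areaServed": service["area_served"], "provider": {"@id": org_id}},
        {"@type": "BreadcrumbList", "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(breadcrumbs, start=1)]},
    ]
    if faqs:  # only when the FAQ is visible on the page
        graph.append({"@type": "FAQPage", "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}} for q, a in faqs]})
    payload = json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)
    # Escape "</" so text inside the JSON cannot close the script tag early.
    return ('<script type="application/ld+json">\n'
            + payload.replace("</", "<\\/") + "\n</script>")


html = service_page_jsonld(
    org={"name": "Example Co", "url": "https://example.com/",
         "address": {"@type": "PostalAddress", "addressCountry": "US"},
         "same_as": ["https://www.linkedin.com/company/example-co"]},
    service={"name": "Technical SEO Audit", "description": "Placeholder description.",
             "type": "Technical SEO", "area_served": "US"},
    breadcrumbs=[("Home", "https://example.com/"),
                 ("Services", "https://example.com/service/"),
                 ("SEO", "https://example.com/service/seo/")],
    faqs=[("Placeholder question?", "Placeholder answer.")],
)
print(html)
```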

JavaScript SEO for B2B SaaS and Tech Companies

B2B SaaS marketing sites built on React, Next.js, Angular, or Vue face JavaScript SEO risks that can suppress rankings entirely if not addressed at the infrastructure level.

The core problem: Googlebot processes JavaScript pages in two stages. It first crawls the raw HTML, then queues the page for rendering, where JavaScript is executed. The gap between the two stages varies and is not guaranteed to be short. Content that only exists after client-side JavaScript execution may not be indexed correctly, or may be indexed with a delay that competitors exploit.

Most Common B2B SaaS JavaScript SEO Failures

- Navigation menus rendered via JS: the links exist only in the rendered page, so Googlebot cannot discover them from the raw HTML and finds them only after rendering. Internal link equity passes late or not at all

- Blog content lazy-loaded after scroll events: Googlebot does not scroll or interact with the page, so posts that load only on scroll may never be fully indexed

- Feature tables or pricing injected via API calls at runtime: Critical service page content is missing from the initial HTML entirely

- Meta tags dynamically injected after page load: Title and description are invisible to first-wave crawlers

- Internal links inside JavaScript components: links implemented as click handlers rather than <a href> elements are not followed, which breaks PageRank distribution across the site

Use the URL Inspection tool in Google Search Console. Run a live test, open the tested page, and compare its rendered HTML and screenshot against your live page. Any visible content gap confirms a JavaScript rendering issue. For a full diagnostic and fix framework: JavaScript SEO: What B2B SaaS Companies Get Wrong.
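The URL Inspection tool checks one URL at a time. For a quick bulk pre-check, you can parse the raw, unrendered HTML (for example, saved from a fetch that does not run JavaScript). This confirms that key links, copy and meta tags are present before rendering. The minimal Python sketch below uses the standard-library HTML parser. The expected links and phrases are hypothetical inputs you would define for each template.

```python
from html.parser import HTMLParser


class RawHtmlAudit(HTMLParser):
    """Collects <a href> links, visible text, title, and meta description from raw HTML."""

    def __init__(self):
        super().__init__()
        self.links, self.text, self.title = set(), [], ""
        self.meta_description = None
        self._in_title = self._in_code = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and attrs.get("href"):
            self.links.add(attrs["href"])  # only real <a href> links count
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content")
        elif tag == "title":
            self._in_title = True
        elif tag in ("script", "style"):
            self._in_code = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag in ("script", "style"):
            self._in_code = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data
        elif not self._in_code:  # ignore script/style source text
            self.text.append(data)


def first_wave_gaps(raw_html: str, expected_links: list[str], phrases: list[str]) -> dict:
    """Report what is missing from the HTML before any JavaScript runs."""
    audit = RawHtmlAudit()
    audit.feed(raw_html)
    audit.close()
    visible = " ".join(audit.text)
    return {
        "missing_links": sorted(set(expected_links) - audit.links),
        "missing_phrases": [p for p in phrases if p not in visible],
        "has_title": bool(audit.title.strip()),
        "has_meta_description": bool(audit.meta_description),
    }


# Hypothetical client-rendered shell: the pricing "link" is a click handler.
shell = """<html><head><title></title><script src="/app.js"></script></head>
<body><nav><a href="/service/seo/">SEO</a>
<span onclick="go('/pricing/')">Pricing</span></nav><div id="root"></div></body></html>"""
print(first_wave_gaps(shell, ["/service/seo/", "/pricing/"], ["Pricing", "Plans from"]))
```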

How to Run a B2B Technical SEO Audit

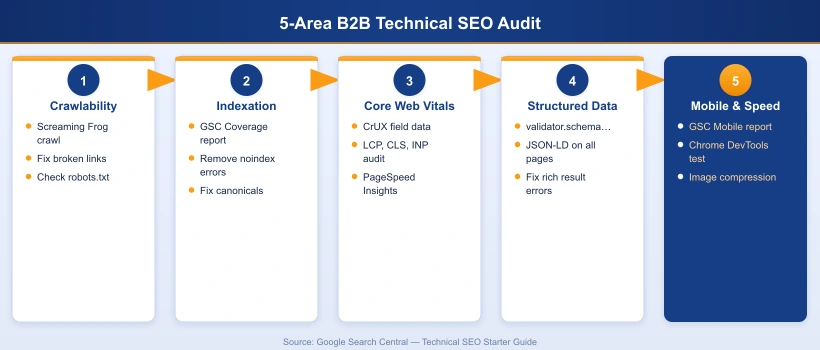

A technical SEO audit for B2B websites covers seven core areas. Run this process quarterly and immediately after any major site migration, CMS update, or significant template change.

- Crawlability audit: Run Screaming Frog against the full domain. Identify pages blocked by robots.txt or noindex, redirect chains with three or more hops, broken internal links (4xx responses), and non-canonical duplicate pages.

- Indexation audit: In Google Search Console’s Page indexing report (formerly Coverage), review the “Discovered – currently not indexed” and “Crawled – currently not indexed” statuses. These indicate pages Google has deprioritized due to thin content, duplicate signals, or crawl budget exhaustion.

- Core Web Vitals audit: Pull CrUX field data from the GSC Core Web Vitals report segmented by URL group. Pages with LCP above 4 seconds carry the highest ranking impact per fix. Prioritize those first.

- Structured data audit: Run all key URLs through validator.schema.org. Confirm each schema type appears as a separate card with zero errors or warnings.

- Duplicate content audit: Check for canonical tag inconsistencies, www vs non-www, HTTP vs HTTPS, and trailing slash URL variations. Every variation should resolve to a single canonical URL via a direct 301 redirect.

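- Internal linking audit: Orphan pages have no internal links pointing to them, so a crawl that starts from the homepage will not find them. Compare crawled URLs against your XML sitemap and analytics data. Screaming Frog can report orphan URLs after crawl analysis when sitemap, GA, or GSC sources are connected. Use the Inlinks tab to confirm how many internal links point to each key page. Orphan pages receive no internal PageRank and rarely rank for competitive queries, regardless of content quality.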

- Mobile usability audit: Google retired the Search Console Mobile Usability report in December 2023, so test key landing pages with Lighthouse and Chrome DevTools mobile emulation instead. Prioritize any errors on service pages and the blog index.
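For the redirect checks in the crawlability and duplicate content audits, you can turn a crawler's redirect export into full chains. Anything with three or more hops is flagged, and a single-hop mapping is produced to replace the chains. The minimal Python sketch below assumes the export has been loaded as a simple source-to-target dict. The paths are hypothetical.

```python
def redirect_chain(start: str, redirects: dict[str, str]) -> list[str]:
    """Follow redirects from start to the final URL; raise on a loop."""
    chain = [start]
    while chain[-1] in redirects:
        nxt = redirects[chain[-1]]
        if nxt in chain:
            raise ValueError("redirect loop: " + " -> ".join(chain + [nxt]))
        chain.append(nxt)
    return chain


def long_chains(redirects: dict[str, str], min_hops: int = 3) -> dict[str, list[str]]:
    """Sources whose chains need min_hops or more hops to reach the final URL."""
    chains = {src: redirect_chain(src, redirects) for src in redirects}
    return {src: c for src, c in chains.items() if len(c) - 1 >= min_hops}


def single_hop_map(redirects: dict[str, str]) -> dict[str, str]:
    """Point every source straight at its final destination."""
    return {src: redirect_chain(src, redirects)[-1] for src in redirects}


redirects = {
    "/seo-services": "/services/seo",
    "/services/seo": "/services/seo/",
    "/services/seo/": "/service/seo/",
    "/old-pricing/": "/pricing/",
}
print(long_chains(redirects))
# {'/seo-services': ['/seo-services', '/services/seo', '/services/seo/', '/service/seo/']}
```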

For a complete step-by-step B2B technical SEO audit template with tooling workflow: How to Run a B2B Technical SEO Audit in 2026.

B2B Technical SEO Checklist for 2026

Use this checklist during quarterly audits and after any major site changes. Each item maps to a specific audit area above.

Crawl and Indexation

- robots.txt does not block any key service pages, blog posts, or the sitemap

- XML sitemap is current and submitted in Google Search Console

- No URLs in the sitemap return 4xx or 5xx status codes

- Canonical tags are correctly set on all pages (self-referencing on primary pages)

- All redirects resolve in a single hop directly to the final destination URL

- No duplicate pages indexed via URL parameter variations
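The sitemap checks can be scripted: extract every <loc> from the XML sitemap and record its HTTP status. The minimal Python sketch below uses only the standard library and assumes a single urlset sitemap, not a sitemap index. Two caveats apply: urllib follows redirects, so a redirecting URL reports its final status, and some servers answer HEAD requests with 405. The example injects a stub status lookup so it runs without network access.

```python
import xml.etree.ElementTree as ET
from urllib.error import HTTPError
from urllib.request import Request, urlopen

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list[str]:
    """All <loc> values from a <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)]


def head_status(url: str, timeout: float = 10.0) -> int:
    """HTTP status of a HEAD request; 4xx/5xx responses arrive as HTTPError."""
    try:
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as resp:
            return resp.status
    except HTTPError as err:
        return err.code


def error_urls(urls: list[str], status_of=head_status) -> list[tuple[str, int]]:
    """Sitemap URLs that answer with a 4xx or 5xx status."""
    results = []
    for url in urls:
        status = status_of(url)
        if status >= 400:
            results.append((url, status))
    return results


sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/service/seo/</loc></url>
  <url><loc>https://example.com/old-case-study/</loc></url>
</urlset>"""
# Stub lookup standing in for live HEAD requests (hypothetical statuses).
fake_status = {"https://example.com/service/seo/": 200,
               "https://example.com/old-case-study/": 404}.get
print(error_urls(sitemap_urls(sample), fake_status))
# [('https://example.com/old-case-study/', 404)]
```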

Site Speed and Core Web Vitals

- LCP under 2.5 seconds on all service pages and top blog posts

- CLS under 0.1 on all pages

- INP under 200ms on key interactive pages

- All images served in WebP format with explicit width and height attributes

- Lazy loading applied to below-fold images only (never to the LCP element)

- No render-blocking scripts in the document head (load non-critical scripts with async or defer)
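Several of these items can be spot-checked from a page's HTML. The minimal Python sketch below flags four things:

- synchronous external scripts in the head
- images without width and height attributes
- images whose URL does not end in .webp, so it will miss WebP delivered through content negotiation
- lazy loading on the first image, which it assumes is the LCP candidate

That last assumption is only a heuristic, so confirm the actual LCP element in Chrome DevTools.

```python
from html.parser import HTMLParser


class PerfAudit(HTMLParser):
    """Flags head scripts and <img> tags that break the speed checklist."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.images_seen = 0
        self.issues: list[str] = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head and a.get("src"):
            # async, defer, and type="module" scripts do not block rendering
            if "async" not in a and "defer" not in a and a.get("type") != "module":
                self.issues.append(f"render-blocking script: {a['src']}")
        elif tag == "img":
            self.images_seen += 1
            src = a.get("src", "")
            if "width" not in a or "height" not in a:
                self.issues.append(f"missing width/height: {src}")
            if not src.split("?")[0].lower().endswith(".webp"):
                self.issues.append(f"not WebP: {src}")
            if self.images_seen == 1 and a.get("loading") == "lazy":
                self.issues.append(f"lazy-loaded LCP candidate: {src}")

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def perf_issues(html: str) -> list[str]:
    audit = PerfAudit()
    audit.feed(html)
    audit.close()
    return audit.issues


page = """<html><head><script src="/analytics.js"></script>
<script src="/app.js" defer></script></head><body>
<img src="/hero.jpg" width="1200" height="600" loading="lazy">
<img src="/chart.webp"></body></html>"""
for issue in perf_issues(page):
    print(issue)
```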

Site Architecture

- No key content pages more than three clicks from the homepage

- URL slugs are descriptive, lowercase, and hyphenated throughout

- No thin category or tag archive pages being indexed

- Internal links use descriptive keyword-rich anchor text

- No orphan pages. Every content page has at least one internal link pointing to it
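Anchor text is easy to audit in bulk. The minimal Python sketch below lists internal links whose anchor text is generic. The GENERIC set and the example.com origin are assumptions to adapt to your site.

```python
from html.parser import HTMLParser

GENERIC = {"click here", "here", "read more", "learn more", "more", "this page"}


class AnchorCollector(HTMLParser):
    """Collects (href, anchor text) for every <a href> element."""

    def __init__(self):
        super().__init__()
        self.anchors: list[tuple[str, str]] = []
        self._href = None
        self._text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            label = " ".join("".join(self._text).split())  # collapse whitespace
            self.anchors.append((self._href, label))
            self._href = None


def generic_internal_anchors(html: str, origin: str = "https://example.com") -> list[tuple[str, str]]:
    """Internal links (relative or same-origin) whose anchor text is generic."""
    collector = AnchorCollector()
    collector.feed(html)
    collector.close()
    return [
        (href, text) for href, text in collector.anchors
        if (href == origin or href.startswith(origin + "/")
            or (href.startswith("/") and not href.startswith("//")))
        and text.lower() in GENERIC
    ]


snippet = ('<p><a href="/service/seo/">Read more</a> '
           '<a href="/service/ppc/">B2B PPC management</a> '
           '<a href="https://other.com/">click here</a> '
           '<a href="https://example.com/insights/blog/"> Learn <b>more</b> </a></p>')
print(generic_internal_anchors(snippet))
```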

Structured Data

- Article schema on all blog posts (datePublished, dateModified, author, publisher)

- Service schema on all service pages with required fields

- Organization schema present sitewide with consistent @id anchor

- FAQPage schema on all pages with a visible FAQ section

- BreadcrumbList schema on all inner pages

- Zero errors on validator.schema.org for all key URLs
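Before running pages through validator.schema.org, a quick script can confirm that each JSON-LD node carries the fields this playbook lists. In the minimal Python sketch below, the EXPECTED table mirrors the fields named above. It is this playbook's convention rather than a schema.org requirement, and the script does not replace the validator.

```python
import json
from html.parser import HTMLParser

EXPECTED = {
    "Article": ["datePublished", "dateModified", "author", "publisher"],
    "Service": ["name", "description", "provider", "areaServed", "serviceType"],
    "Organization": ["@id", "name", "url", "address", "sameAs"],
    "FAQPage": ["mainEntity"],
    "BreadcrumbList": ["itemListElement"],
}


class JsonLdBlocks(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks: list[str] = []
        self._capturing = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capturing = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._capturing = False

    def handle_data(self, data):
        if self._capturing:
            self.blocks[-1] += data


def missing_fields(html: str) -> list[str]:
    """List 'Type: missing field' for every node lacking an expected field."""
    parser = JsonLdBlocks()
    parser.feed(html)
    parser.close()
    problems = []
    for block in parser.blocks:
        data = json.loads(block)
        nodes = data.get("@graph", [data]) if isinstance(data, dict) else data
        for node in nodes:
            types = node.get("@type", [])
            for t in [types] if isinstance(types, str) else types:
                problems += [f"{t}: missing {f}" for f in EXPECTED.get(t, []) if f not in node]
    return problems


page = '''<script type="application/ld+json">
{"@context": "https://schema.org", "@graph": [
  {"@type": "Organization", "@id": "https://example.com/#organization", "name": "Example Co",
   "url": "https://example.com/", "address": {"@type": "PostalAddress", "addressCountry": "US"}},
  {"@type": "Service", "name": "Technical SEO Audit", "description": "Placeholder.",
   "provider": {"@id": "https://example.com/#organization"}, "serviceType": "Technical SEO"}
]}
</script>'''
print(missing_fields(page))
# ['Organization: missing sameAs', 'Service: missing areaServed']
```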

Build on This with Semantic SEO

Technical health is necessary but not sufficient. The next layer is topical authority: structuring your content so Google understands the breadth and depth of your subject-matter expertise. Read our complete guide to semantic SEO to learn how topic clusters, entity optimisation, and knowledge graph alignment compound your technical work into rankings that hold.

Prefer a Managed SEO Engagement?

If your team lacks the bandwidth or in-house expertise to execute a full technical audit and fix cycle, Growmatix’s B2B SEO services cover everything from crawl remediation to Core Web Vitals optimisation and structured data implementation.

Frequently Asked Questions

What is the difference between technical SEO and on-page SEO?

Technical SEO addresses the infrastructure layer: crawlability, indexation, site speed, structured data, and site architecture. On-page SEO addresses the content layer: keyword optimization, headings, internal links, and meta tags. Both are required for sustained rankings but operate at different levels of the website.

How often should a B2B company run a technical SEO audit?

Quarterly for most B2B sites, and immediately after any major site migration, CMS update, or significant template change. Google Search Console's Core Web Vitals and Page indexing reports serve as ongoing monitors between full audits.

Does technical SEO affect Google AI Overviews?

Yes. AI Overviews draw on pages that Google has crawled, rendered, and indexed. Content that Googlebot cannot render cannot be used as a source. Pages with poor Core Web Vitals or missing structured data can also be at a disadvantage against better-optimized competitors for AI-generated answers.

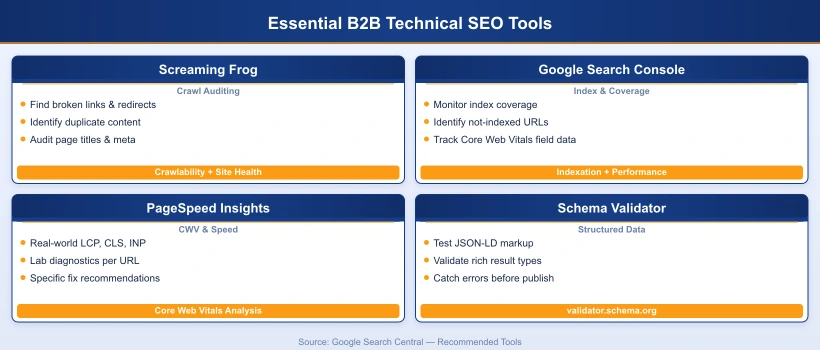

What tools are recommended for B2B technical SEO audits?

Screaming Frog SEO Spider for full-site crawl analysis, Google Search Console for indexation and Core Web Vitals field data, Ahrefs Site Audit for automated ongoing monitoring, and validator.schema.org for structured data validation.

How does site architecture affect B2B SEO performance?

Site architecture directly controls how efficiently Googlebot distributes crawl budget across your pages. A flat, logically organized structure ensures high-value service pages receive adequate crawl frequency. Deep or fragmented architectures result in key pages being crawled infrequently or missed entirely.

What is crawl budget and does it matter for B2B websites?

Crawl budget is the number of pages Googlebot crawls on a site within a given timeframe. Google’s guidance treats it mainly as a concern for very large or rapidly changing sites. Even so, on B2B sites with 300 or more pages, spending crawl activity on duplicate URLs, thin archive pages, or parameter variations can mean important service pages and blog posts are crawled less frequently.
